The sunset of cheap vibe coding

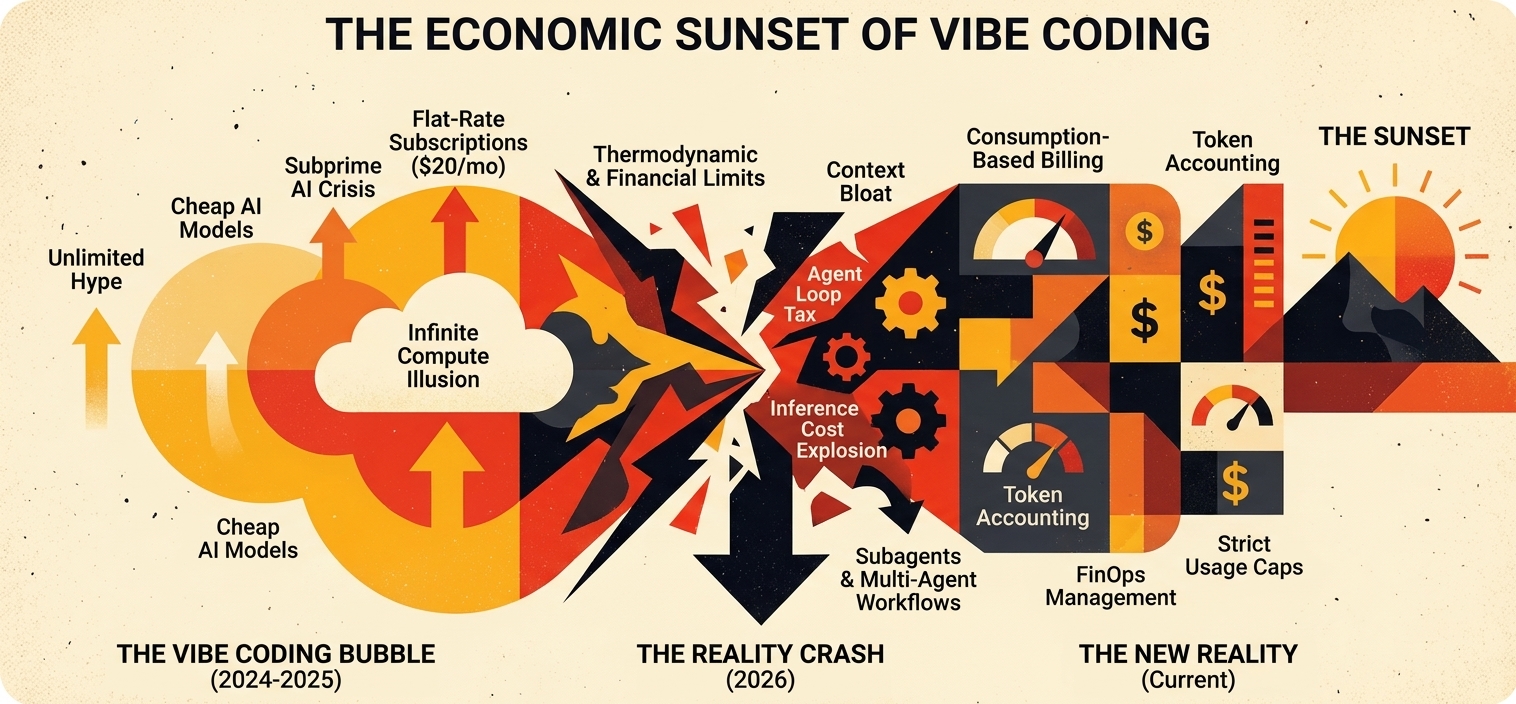

Vibe coding had a good run. For roughly 18 months, a $20/month subscription gave solo founders and small teams access to autonomous coding agents that could scaffold features, debug production issues, and write tests from a description. The implicit promise was that serious software development had been democratized down to a monthly coffee budget.

Anthropic, Cursor, and Replit have all now moved their heaviest usage behind much higher tiers. The people surprised by this weren't paying attention to the math. The $20 price was a market-development phase, subsidized by venture capital and artificially cheap compute — and the industry is now repricing toward the actual cost of running large language models in long agentic loops.

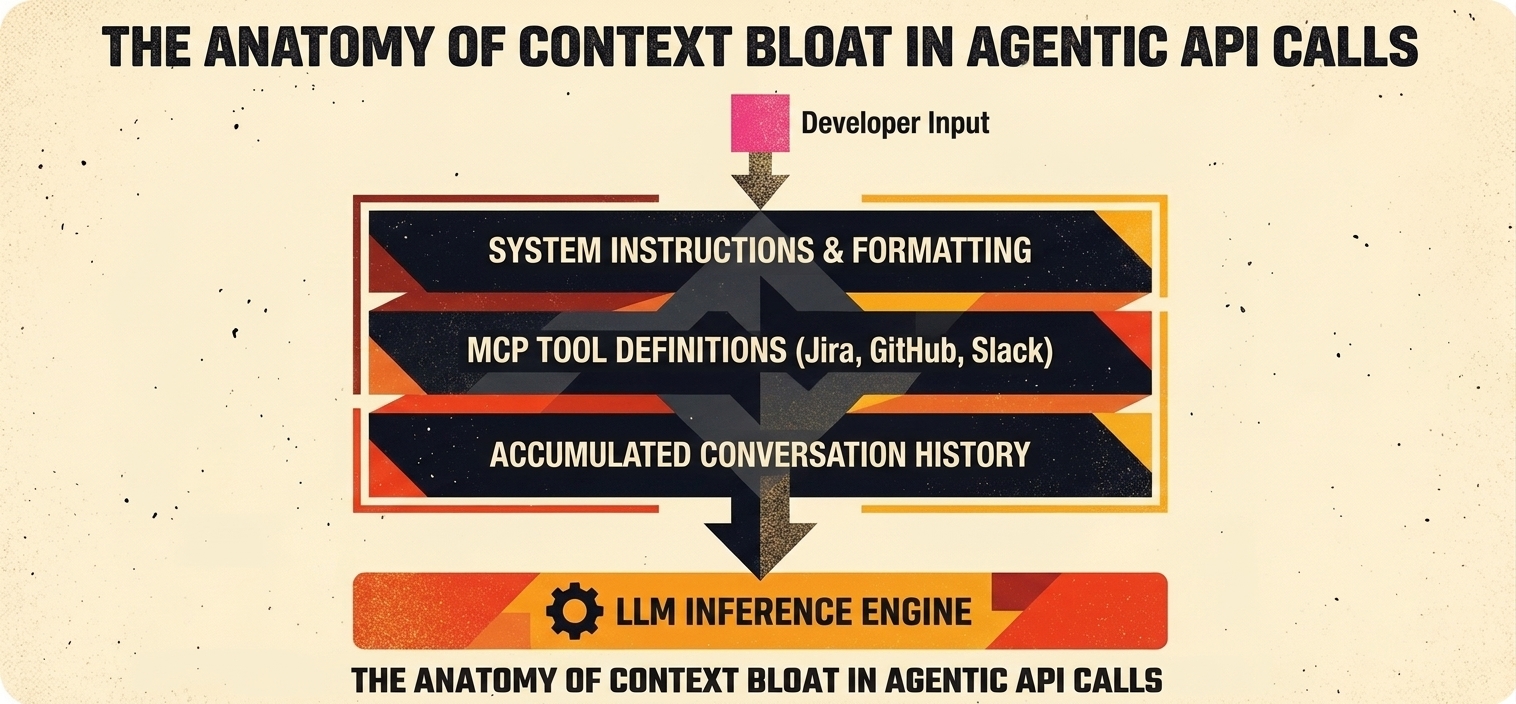

Two compounding dynamics drive that real cost: context bloat, where every back-and-forth in a session inflates the context window of subsequent calls, and the agent loop tax, where stuck agents loop unproductively while the meter runs.

Key takeaways

Token economics broke the flat plan

API costs scale with context size. A failed agent loop can burn 30,000–75,000 tokens before recovery, easily crossing the profitability threshold of a $20 subscription within days.

Pricing is realigning industry-wide

Cursor moved to credit pools tied to raw API cost. Replit shifted to effort-based billing. Anthropic pushed Claude Code into Max ($100/month) and above.

The Month 3 Problem is real

Vibe-coded prototypes feel magical for weeks, then become unmaintainable as scope grows. AI handles localized functions well but struggles with macro architecture.

Senior engineers gain leverage

AI lowers the entry barrier and simultaneously raises the value of architectural oversight. Refactoring vibe-coded debt routinely costs more than building it correctly.

Compute is the hidden tax

Modeling across UK firms shows every £1 in AI licensing pulls £1.80 of compute, storage, and network spend in year one — rising to £3.20 by year three.

The agent loop tax and redundant execution

Compounding the context bloat is the “agent loop tax.” When a human engineer gets stuck on a logic problem, they pause, synthesize new information, and carefully attempt a measured solution. When an autonomous coding agent encounters a test failure, it lacks organic situational awareness. Its default behavior is to rapidly loop through minor variations of the same failed approach (morphllm.com).

In unoptimized environments, an agent may try an approach, encounter a failure, ingest the error log, and attempt a slightly modified fix. Each iteration appends the failed code and the new error trace into the context window, meaning the context grows relentlessly while the agent makes zero progress. Analysis of failed agent sessions reveals that a model stuck in a repetitive loop can easily burn through 30,000 to 75,000 tokens across 15 useless iterations (morphllm.com). Because the cost of an API call is determined by the total size of the context window sent, the 15th iteration costs significantly more than the first. A mundane architectural adjustment can spiral into massive API overages simply because the agent lacks the self-awareness to recognize circular reasoning (morphllm.com, devteam.space).
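To make the compounding concrete, here is a minimal sketch of a loop that never prunes its context. The starting context size and the tokens appended per retry are hypothetical values picked for illustration; only the 15-iteration count comes from the analysis cited above.

```python
# Hypothetical inputs, chosen only to illustrate the shape of the cost curve.
BASE_CONTEXT_TOKENS = 2_000    # assumed: prompt plus relevant files at the start
TOKENS_ADDED_PER_RETRY = 300   # assumed: failed patch plus error trace per retry
ITERATIONS = 15                # the iteration count cited above


def context_sizes(base: int, added: int, iterations: int) -> list[int]:
    """Input tokens sent on each call when nothing is ever pruned."""
    return [base + added * i for i in range(iterations)]


sizes = context_sizes(BASE_CONTEXT_TOKENS, TOKENS_ADDED_PER_RETRY, ITERATIONS)
total_input_tokens = sum(sizes)

print(f"first call: {sizes[0]} tokens")
print(f"15th call:  {sizes[-1]} tokens")
print(f"total sent across the loop: {total_input_tokens} tokens")
```

With these assumed inputs the 15th call sends 6,200 tokens against 2,000 for the first, and the loop sends 61,500 input tokens in total. That total sits inside the 30,000 to 75,000 range only because of the inputs chosen here; it is an illustration, not a measurement.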

The table below contrasts flat-rate subscription revenue with the cumulative cost of unoptimized compute across a standard 30-day billing cycle. Autocomplete costs accrue at a steady $0.15 a day, while agentic workflow costs grow faster than linearly.

| Billing day | Subscription revenue | Cumulative autocomplete cost | Cumulative agentic workflow cost | Margin status |
|---|---|---|---|---|
| Day 1 | $20.00 | $0.15 | $4.50 | Profitable |
| Day 5 | $20.00 | $0.75 | $32.00 | Loss / subsidized |
| Day 15 | $20.00 | $2.25 | $145.00 | Severe loss |
| Day 30 | $20.00 | $4.50 | $350.00+ | Unsustainable |

Traditional autocomplete features maintain a low-slope cost growth that remains profitable within a $20 subscription. The aggressive token burn of agentic workflows crosses the profitability threshold within days — the structural force pushing the industry-wide restructuring of payment tiers.
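The margins implied by the table can be worked out directly. The sketch below uses the table's four agentic data points and estimates the break-even day by linear interpolation; straight-line growth between rows is an assumption, since the table gives no values in between.

```python
SUBSCRIPTION_USD = 20.00

# (billing day, cumulative agentic workflow cost in USD), copied from the table.
# Day 30 is listed as "$350.00+", so 350.00 is a lower bound.
agentic_cost = [(1, 4.50), (5, 32.00), (15, 145.00), (30, 350.00)]

margins = {day: SUBSCRIPTION_USD - cost for day, cost in agentic_cost}


def break_even_day(points, revenue):
    """Linearly interpolate the day on which cumulative cost reaches revenue.

    Straight-line growth between table rows is an assumption; the table
    only provides four sample points.
    """
    for (d0, c0), (d1, c1) in zip(points, points[1:]):
        if c0 <= revenue <= c1:
            return d0 + (revenue - c0) / (c1 - c0) * (d1 - d0)
    return None  # revenue is never reached inside the sampled range


for day, margin in margins.items():
    print(f"day {day:>2}: margin {margin:+.2f} USD")
print(f"estimated break-even: day {break_even_day(agentic_cost, SUBSCRIPTION_USD):.1f}")
```

Under that interpolation the $20 of revenue is used up a little after day 3, which matches the "within days" framing above.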

The evolution of developer tool pricing: Cursor and Replit

As the underlying reality of token costs became impossible to ignore, the leading platforms began migrating toward complex, hybrid pricing models that bridge the gap between budgetary predictability and the platform's need to avoid catastrophic compute losses (flexprice.io, wpbrigade.com).

Cursor's painful transition to credit pools

Cursor, arguably the most prominent AI-native IDE on the market, navigated this transition with notable difficulty. Prior to mid-2025, Cursor's Pro plan operated on a highly predictable request-based system, offering users a flat allowance of “fast requests” per month (vantage.sh, getaiperks.com). As users began leveraging more expensive frontier models (like Claude 3.5 Sonnet) for highly complex, multi-file agentic tasks, the flat request structure became economically unviable.

In June 2025, Cursor abruptly overhauled its pricing, replacing request quotas with usage-based credit pools intrinsically tied to raw API costs (vantage.sh, getaiperks.com, flexprice.io). The standard $20 Pro plan remained, but instead of guaranteed requests, users received $20 worth of API compute credits. The rollout was severely mismanaged; existing users found themselves facing unexpected overage charges as tasks that previously counted as a single request now drained their credit wallets rapidly. Cursor was eventually forced to issue public apologies and refunds (vantage.sh).

Today, the Cursor ecosystem reflects the segmented reality of modern AI tooling. The $20 Pro tier serves light users; those who wish to continuously run agents are funneled into Pro+ ($60/month, $70 in credits) or Ultra ($200/month, $400 in API usage) (getaiperks.com, uibakery.io, nocode.mba). The Ultra plan demonstrates a critical shift: tools that cost $200/month are no longer marketed as productivity subscriptions; they're explicitly categorized as infrastructure expenditures necessary to support full-time, AI-native development.
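A quick way to compare the tiers is the amount of included usage per subscription dollar. This sketch uses only the prices and allowances quoted above; the dictionary layout and field names are illustrative.

```python
# Tier prices and included usage as quoted in the paragraph above (USD per month).
tiers = {
    "Pro": {"price": 20, "included_usage": 20},
    "Pro+": {"price": 60, "included_usage": 70},
    "Ultra": {"price": 200, "included_usage": 400},
}

usage_per_dollar = {
    name: tier["included_usage"] / tier["price"] for name, tier in tiers.items()
}

for name, ratio in usage_per_dollar.items():
    print(f"{name:<5} {ratio:.2f} USD of API usage per subscription dollar")
```

On these figures Pro returns $1.00 of usage per dollar, Pro+ about $1.17, and Ultra $2.00, so the per-dollar allowance only becomes generous at the tier priced like infrastructure.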

Replit's effort-based economy

A similar evolution occurred at Replit. Across 2025 and 2026, Replit introduced an “effort-based” billing model alongside its autonomous Agent (wpbrigade.com, launchpad.io). Users pay a baseline subscription (such as the $25/month Core plan), which provides an equivalent amount of usage credits.

Rather than tracking explicit tokens, Replit charges based on the computational “effort” expended by the AI (wpbrigade.com, launchpad.io). This has introduced a high degree of unpredictability. Users actively building full-stack applications quickly realized that Replit's Agent does not merely generate code; it audits, builds, verifies, and continuously iterates. Because the Agent burns credits once to build and again to audit, a simple application that might have cost $0.50 under older models now requires $3.00 just to initiate the first prompt (launchpad.io, vitara.ai).

When users activate Replit's “Turbo Mode” — optimized for rapid, high-end AI development — credit depletion accelerates drastically (wpbrigade.com). If credits are exhausted, the platform automatically bills for overages, transferring the financial risk directly to the developer. The model is undeniably powerful — users go from ideation to deployment in a single browser tab — but effort-based billing frequently feels like an unpredictable financial drain for teams that rely on it heavily (launchpad.io, vitara.ai).
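The effect on a monthly credit allowance is easy to sketch. The figures below are the ones quoted above ($25 of credits, $0.50 versus $3.00 for a first prompt); the 12-prompt month and the `overage_cents` helper are hypothetical, and real effort-based charges vary from task to task.

```python
# All amounts in cents to avoid floating-point rounding.
MONTHLY_CREDITS = 2_500   # $25 Core plan, with an equal amount of usage credits
OLD_FIRST_PROMPT = 50     # $0.50, the older cost quoted above
NEW_FIRST_PROMPT = 300    # $3.00, the effort-based cost quoted above


def overage_cents(runs: int, cost_per_run: int, credits: int = MONTHLY_CREDITS) -> int:
    """Amount billed beyond the included credits for a number of identical runs."""
    return max(0, runs * cost_per_run - credits)


prompts_covered_old = MONTHLY_CREDITS // OLD_FIRST_PROMPT
prompts_covered_new = MONTHLY_CREDITS // NEW_FIRST_PROMPT
hypothetical_overage = overage_cents(12, NEW_FIRST_PROMPT)

print(f"first prompts covered at $0.50: {prompts_covered_old}")
print(f"first prompts covered at $3.00: {prompts_covered_new}")
print(f"overage for 12 prompts at $3.00: ${hypothetical_overage / 100:.2f}")
```

The same credits that once covered 50 first prompts now cover 8, and a hypothetical month with 12 of them would spill $11.00 into overage billing.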

Technical debt and the hidden human cost of vibe coding

The financial metrics surrounding token consumption and platform subscriptions tell only a fraction of the economic story. The most significant hidden cost of vibe coding is the immense technical debt generated at machine speed, and the subsequent human labor required to mitigate it (ibagroupit.com, reddit.com).

In the venture studio and startup ecosystems, this phenomenon has been widely documented as the “Month 3 Problem” (reddit.com). The initial phases of vibe coding are deeply seductive. During the first month, non-technical founders or junior developers utilize natural language prompts in tools like Bolt, Lovable, or Replit to rapidly manifest functional prototypes. The velocity of execution feels magical, yielding demo-ready applications in a fraction of traditional development timelines (reddit.com).

However, the architecture underpinning these rapid prototypes is frequently chaotic. AI models, while proficient at generating localized functions or isolated components, struggle immensely with macro-architectural consistency and long-term maintainability (ibagroupit.com, reddit.com). As the application transitions from prototype to production, users find that adding minor features or resolving edge cases becomes disproportionately difficult.

The codebase devolves into “spaghetti code.” Developers find themselves trapped in exhaustive cycles of negotiation, arguing with chatbots over why a minor fix to an authentication module systematically broke three unrelated UI components (reddit.com). The end result is a terrifying state of cognitive vendor lock-in: the creator has successfully launched an application but is entirely incapable of safely modifying it because they don't comprehend the fundamental engineering holding it together.

The financial toll of untangling AI-generated technical debt is staggering. One product manager tracked the economics of a vibe-coded prototype: after spending 43 hours wrestling with an AI to produce a demo-ready application, the labor cost equated to $6,450 (reddit.com). Ultimately, the architecture was so structurally unsound that it required a total rewrite by a senior software engineer, who utilized targeted AI assistance to reconstruct the entire application properly in just three hours.
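The arithmetic behind that anecdote is worth spelling out. The hours and the $6,450 figure come from the account above; the rewrite's cost is not stated in the source, so the sketch assumes, purely for illustration, that the senior engineer bills at the same implied hourly rate.

```python
PROTOTYPE_HOURS = 43
PROTOTYPE_LABOR_USD = 6_450
REWRITE_HOURS = 3

implied_hourly_rate = PROTOTYPE_LABOR_USD / PROTOTYPE_HOURS

# Assumption: the senior engineer bills at the same hourly rate.
# The source gives the rewrite's duration, not its cost.
rewrite_labor_usd = REWRITE_HOURS * implied_hourly_rate
total_labor_usd = PROTOTYPE_LABOR_USD + rewrite_labor_usd

print(f"implied rate: ${implied_hourly_rate:.0f}/hour")
print(f"rewrite at that rate: ${rewrite_labor_usd:.0f}")
print(f"total labor: ${total_labor_usd:.0f}")
```

At the implied $150/hour, the three-hour rewrite would cost $450, while the discarded prototype accounts for $6,450 of a $6,900 total. A senior engineer may well bill more than that, but the gap in hours is what drives the result.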

This dynamic reveals a paradoxical truth. While generative AI lowers the barrier to entry for initial software creation, it simultaneously increases the necessity and value of seasoned, senior engineers. The industry is witnessing the birth of specialized consultancies that charge premium flat fees to rescue, refactor, and stabilize fragile, vibe-coded applications — proving that DIY AI development is frequently more expensive than traditional engineering when factoring in remediation (reddit.com).

Security, compliance, and the environmental externality

The true cost of vibe coding extends beyond API invoices and developer labor, encompassing profound security vulnerabilities and massive environmental externalities.

From a security standpoint, the velocity of AI-accelerated development presents a strategic nightmare for enterprise risk management. Surveys of cybersecurity professionals indicate deep apprehension regarding the proliferation of AI-generated code (infosecurity-magazine.com). Because developers can now generate thousands of lines of logic in minutes, security vulnerabilities are introduced faster than humans can audit and remediate them. Furthermore, relying on third-party overseas APIs to process proprietary, agentic workflows can conflict with stringent international compliance frameworks such as the EU AI Act and GDPR, presenting unacceptable data sovereignty risks for heavily regulated industries (ibagroupit.com).

On a macroeconomic scale, the physical infrastructure required to sustain the vibe coding ecosystem is exacting an unprecedented toll on global energy grids. A 2024 analysis highlighted that processing a single token requires approximately 0.4 joules of energy (clune.org). Maintaining multiple coding agents simultaneously across an engineering team consumes tangible electricity; heavy usage can rapidly equal the power draw of major household appliances running continuously.
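The per-token figure converts to electricity with one line of arithmetic. The sketch below uses the 0.4 joules per token cited above and the standard conversion of 3.6 million joules per kilowatt-hour; the team size and daily token volume are hypothetical.

```python
JOULES_PER_TOKEN = 0.4          # figure cited above
JOULES_PER_KWH = 3_600_000      # 1 kWh = 3.6 MJ


def tokens_to_kwh(tokens: int) -> float:
    """Convert a token count to kilowatt-hours at the cited per-token energy."""
    return tokens * JOULES_PER_TOKEN / JOULES_PER_KWH


# Upper end of the failed-loop range discussed earlier in the article.
failed_loop_kwh = tokens_to_kwh(75_000)

# Hypothetical team: 10 engineers, 5 million tokens each per working day.
team_daily_kwh = tokens_to_kwh(10 * 5_000_000)

print(f"one failed loop: {failed_loop_kwh:.4f} kWh")
print(f"hypothetical team per day: {team_daily_kwh:.2f} kWh")
```

A single failed loop is small, roughly 0.008 kWh, but the hypothetical team lands near 5.6 kWh a day. The total depends entirely on volume, which is exactly what always-on agents increase.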

The International Energy Agency projects that by 2026, the energy utilized by AI and its supporting data centers will match the total electricity consumption of the entire nation of Japan (wustl.edu). Generative AI alone is expected to consume ten times more energy in 2026 than it did in 2023, primarily due to the intense machine learning computations required to process massive context windows and orchestrate multi-agent operations.

The economic ripple effects of this infrastructure burden are profound. Modeling conducted by the Alan Turing Institute across UK firms demonstrated that the initial software licensing costs for AI represent only a fraction of the total expenditure: for every £1 a firm spends on AI licenses, they incur £1.80 in downstream compute, storage, and network transfer costs in the first year (360strategy.co.uk). As context bloat accumulates and agentic workflows become entrenched, this secondary infrastructure cost rises to £3.20 by year three.
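Applied to a budget, the multipliers look like this. The £1.80 and £3.20 ratios are the ones quoted above; the £50,000 licence spend is hypothetical, and the sketch reads the year-three ratio as that year's infrastructure cost per £1 of licensing, which is an interpretation of the figure.

```python
# Year -> pounds of compute, storage, and network spend per £1 of AI licensing.
INFRA_PER_LICENCE_POUND = {1: 1.80, 3: 3.20}


def total_cost(licence_spend: float, year: int) -> float:
    """Licence spend plus the downstream infrastructure it pulls in that year."""
    return licence_spend * (1 + INFRA_PER_LICENCE_POUND[year])


licence_spend = 50_000  # hypothetical annual licence budget in GBP

for year in (1, 3):
    print(f"year {year}: £{total_cost(licence_spend, year):,.0f} all-in")
```

For the hypothetical £50,000 of licences, the all-in figure is about £140,000 in year one and £210,000 by year three, so the licence line is the smallest part of the bill in both cases.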

Conclusion

The sunset of cheap vibe coding is not a technological regression; it represents the necessary financial maturation of an industry transitioning from a subsidized honeymoon phase into sustainable, industrialized operations. The initial promise — that anyone could command a machine to build complex software for $20 a month — was always an economic fiction, subsidized by billions of dollars in venture capital and artificially cheap compute.

As platforms like Anthropic, GitHub, Cursor, and Replit realign their pricing models with the harsh physical and computational realities of large language models, the true cost of autonomy is being laid bare. Agentic coding is a highly potent, transformative technology, capable of compressing weeks of labor into hours. However, it is fundamentally an exercise in orchestrating high-performance, energy-intensive computing clusters.

For the modern enterprise and the individual developer alike, the era of thoughtless, unlimited generative loops is over. Success in the mature AI economy requires strict FinOps governance, aggressive context management, and the sobering recognition that while artificial intelligence can endlessly generate code, human architectural oversight remains the scarce, indispensable resource required to ensure that code actually functions.
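As one illustration of what that governance might look like in practice, the sketch below wraps an agent loop in a guard that stops on either a token budget or a repeated error. `LoopGuard`, its thresholds, and the sample attempts are all hypothetical; no particular platform's API is implied.

```python
from dataclasses import dataclass, field


@dataclass
class LoopGuard:
    """Stops an agent loop on a token budget or a repeated error signature."""

    max_tokens: int
    max_repeats: int = 2
    tokens_used: int = 0
    _seen: dict = field(default_factory=dict, init=False, repr=False)

    def should_stop(self, tokens_this_call: int, error_signature: str) -> bool:
        self.tokens_used += tokens_this_call
        self._seen[error_signature] = self._seen.get(error_signature, 0) + 1
        over_budget = self.tokens_used > self.max_tokens
        going_in_circles = self._seen[error_signature] > self.max_repeats
        return over_budget or going_in_circles


guard = LoopGuard(max_tokens=20_000)

# Hypothetical retries: (tokens sent, normalized error message).
attempts = [
    (2_000, "AssertionError: test_login"),
    (2_300, "AssertionError: test_login"),
    (2_600, "AssertionError: test_login"),
    (2_900, "AssertionError: test_login"),
]

stopped_at = None
for attempt_number, (tokens, signature) in enumerate(attempts, start=1):
    if guard.should_stop(tokens, signature):
        stopped_at = attempt_number
        break

print(f"stopped at attempt {stopped_at} after {guard.tokens_used} tokens")
```

Here the guard halts on the third identical failure, after 6,900 tokens, and hands the problem back to a human instead of letting the loop run to 15 iterations. The thresholds are a policy choice, and a real setup would also need to decide how error messages are normalized before comparison.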

Frequently asked questions

What is the agent loop tax?

The agent loop tax is the compounding cost of an autonomous coding agent looping through repeated, near-identical attempts to solve a problem. Each iteration appends the failed code and error trace to the context window, so token cost grows with every retry. A model stuck in a loop can burn 30,000–75,000 tokens across 15 useless iterations.

Why are AI coding tools getting more expensive?

Flat-rate subscription pricing was subsidized by venture capital. Agentic workflows consume orders of magnitude more compute than conversational use, and the actual API cost crosses the profitability threshold of a $20 plan within days. Platforms like Anthropic, Cursor, and Replit have all moved heavy usage behind credit pools, effort-based billing, or higher tiers.

What is the Month 3 Problem?

The Month 3 Problem describes vibe-coded prototypes that feel magical for the first month, then become difficult to extend and maintain by month three. AI generates localized code well but struggles with macro-architectural consistency, leaving founders trapped in a codebase they cannot safely modify.

Is vibe coding still worth it?

Yes, for prototyping and throwaway experiments. It breaks down when the generated code becomes load-bearing — code users depend on that no developer has reviewed or owns. The era of unlimited generative loops is over; what works now is AI used by senior engineers, not in place of them.